Intro to Differentiable Swift, Part 1: Gradient Descent

In Part 0 of this series, we introduced the usefulness of automatic differentiation.

If you’ve defeatedly come to accept that those years of calculus classes were a complete waste of time, prepare for a pleasant surprise! They actually were useful!

(For those who don’t feel that way, you probably already know what gradient descent is. You can skip to Part Two.)

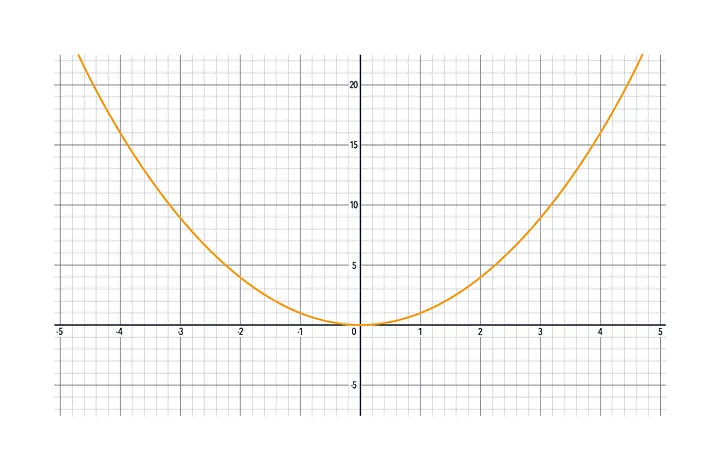

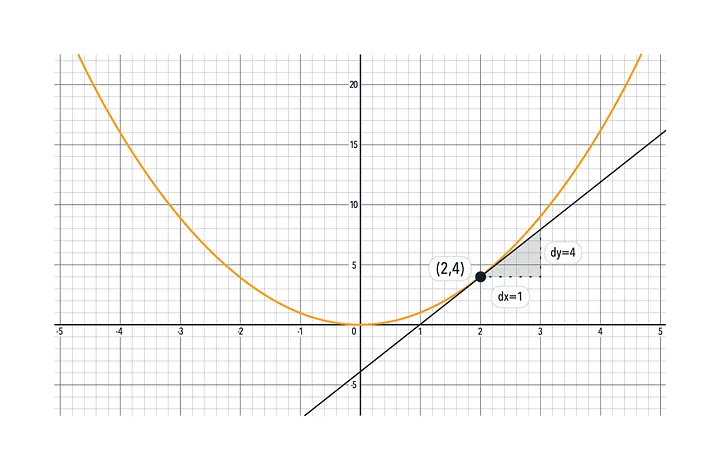

Here’s a function you’ve seen before in your math classes, and probably a lot since then. It’s f(x) = x²:

If you did take some calculus, you’ll remember that the derivative of x² is 2x. If you didn’t… the derivative of x² is still 2x. There, saved you a few months and a few thousand dollars :)

What is a derivative? It represents change. Change is important.

If you knew how to change the output of a function to something you liked, you could, well, change it to something you liked. If the function were more interesting than x², you might care about that.

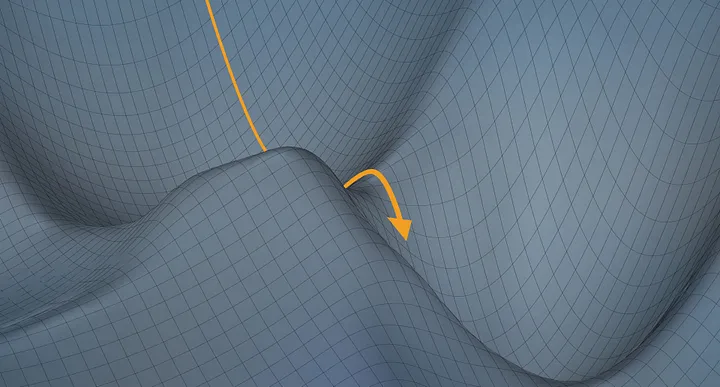

With the function x², it’s easy to look at the graph and point to a place you like. But if the function were more complex, with more inputs and outputs, you might not even be able to make the graph without spending years of CPU cycles. This is why efficient optimization is important. It’s the attempt to get to a place you like without seeing the whole graph.

For the rest of this tutorial, we’ll keep talking about x². But in your mind, think of it as a more interesting function. One with as many inputs and outputs as you want. One that calculates something useful. Like an image processing operation, or a physics calculation, or an enemy AI decision in a computer game.

Okay, let’s get into it!

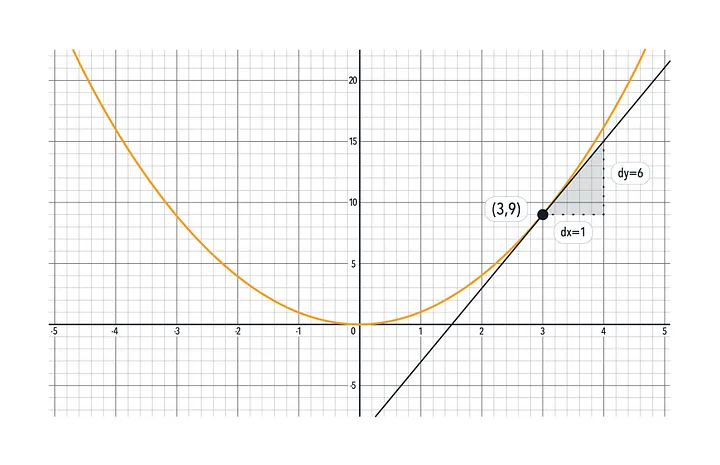

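The derivative of a function tells you how fast the output changes as you nudge the input. A handy way to read it: it’s approximately how much the output will change if you add 1 to the input. (The estimate is exact for a straight line. For a curve, it gets better the smaller the step you take.)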

For x², the derivative is 2x. This tells us how much an adjustment to the input will change the output at any given point.

For example, at the point x = 3: The derivative is 2x, and x is 3, so the derivative is 2(3), or 6.
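If you'd like to check that arithmetic with code, here is a minimal sketch in plain Swift (no Differentiable Swift yet). The derivative `dfdx` is written by hand, and `numericalSlope` is a hypothetical helper, not part of any library, that estimates the slope by sampling the function just below and just above the point.

```swift
// f(x) = x², the function from this article.
func f(_ x: Double) -> Double {
    return x * x
}

// The derivative of x², written by hand: 2x.
func dfdx(_ x: Double) -> Double {
    return 2 * x
}

// Hypothetical helper (an assumption for illustration): estimates the slope
// of `g` at `x` by measuring rise over run across a tiny interval around x.
func numericalSlope(of g: (Double) -> Double, at x: Double, h: Double = 1e-4) -> Double {
    return (g(x + h) - g(x - h)) / (2 * h)
}

let exact = dfdx(3)                          // 2 * 3
let estimate = numericalSlope(of: f, at: 3)  // should land very close to `exact`

print("hand-written derivative at x = 3: \(exact)")
print("numerical estimate at x = 3: \(estimate)")
```

The two numbers should agree to many decimal places, which is a decent sanity check whenever you write a derivative by hand.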

The derivative is also called the “slope” or “rise over run”. More precisely, it’s the slope of the tangent line at that point. That’s the slanted black line above.

So, if we add 1 to the input, the output will go up by about 6.

The derivative only tells you the slope at the exact point x = 3. As you move away from x = 3, the slope may change, depending on the function. In our case, it becomes steeper above x = 3 and shallower below it. Not by too much, though: you can see on the graph that if we step up by one to x = 4, the function lands at 16, which is pretty close to the 15 the derivative predicted (9 + 6 = 15).
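To see how the prediction holds up as the step shrinks, here is a small sketch that compares the tangent-line guess at x = 3 against the real value of x². The helper name `predicted` and the step sizes are assumptions chosen for illustration.

```swift
// f(x) = x² and its hand-written derivative 2x.
func f(_ x: Double) -> Double { x * x }
func dfdx(_ x: Double) -> Double { 2 * x }

// Hypothetical helper: follows the tangent line at `x` for `step` units
// and returns the output it predicts there.
func predicted(from x: Double, step: Double) -> Double {
    return f(x) + dfdx(x) * step
}

// Try a big step, a medium step, and a small step away from x = 3.
for step in [1.0, 0.5, 0.1] {
    let guess = predicted(from: 3, step: step)
    let actual = f(3 + step)
    print("step \(step): predicted \(guess), actual \(actual), miss \(actual - guess)")
}
```

For x², the miss works out to the square of the step, so halving the step cuts the error to a quarter. Other functions will miss by different amounts, but the pattern of smaller steps giving better predictions is the general one.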

Now, imagine this were a function you cared about. What if having f(x) evaluate to zero meant something important (say, if the output were a loss or cost)? You could get there starting from x = 3!

The derivative tells you which way is uphill. We know that at x = 3, if you increase x by one, then f(x) will go up by about 6. We want f(x) to go down, however, towards zero. So let’s move x down by one instead of up. From 3 down to 2. That should change f(x) by about -6.

Now f(x) is 4! We’re way closer to f(x) being zero now! (It was 9 at x = 3, so the actual change was -5.)

If we get another derivative, this time at x = 2, we can take another step closer to 0. The derivative is 2x, and x is now 2, so the slope is 4.

So if x increases by one, the output will increase by about 4. Let’s go in the opposite direction again. Another step, from 2 to 1, takes the output from 4 down to 1!

We could continue taking steps like this until f(x) reaches 0. (Here, one more step, from 1 to 0, gets us there.) That’s gradient descent!
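Here is a minimal sketch of that loop in plain Swift. One difference from the walkthrough above: instead of always moving x by exactly 1, this version uses the more common form where each step is the derivative multiplied by a small "learning rate", so steps shrink as the slope flattens. The function name `gradientDescent`, the learning rate of 0.1, and the 50 steps are all assumptions picked for illustration.

```swift
// f(x) = x² and its hand-written derivative 2x.
func f(_ x: Double) -> Double { x * x }
func dfdx(_ x: Double) -> Double { 2 * x }

// Hypothetical helper: takes `steps` downhill steps starting from `start`.
func gradientDescent(from start: Double, learningRate: Double, steps: Int) -> Double {
    var x = start
    for _ in 0..<steps {
        // The derivative points uphill, so subtract it to go downhill.
        x -= learningRate * dfdx(x)
    }
    return x
}

// Start at x = 3, like the walkthrough above.
let result = gradientDescent(from: 3, learningRate: 0.1, steps: 50)
print("x = \(result), f(x) = \(f(result))")
```

With these settings, each step multiplies x by 0.8, so x creeps towards 0 without ever jumping past it. A learning rate that is too large can overshoot and bounce around instead, which is why it is usually kept small.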

By the way, even though a function like f(x) = y is simple and easy to understand, everything we’ve reviewed here works the same for f(x, y) = (a, b), or f(x, y, z) = (a, b, c, d, e, f, g), or even f(x) = (w, a, i, t, m, a, t, h, w, a, s, c, o, o, l, a, l, l, a, l, o, n, g, ?), or whatever combination of inputs and outputs your function has. The graphs of these functions might become impossible to visualize, but the math is all the same. For example, in the case of f(x, y) = z, the function still has a derivative. It’s just that there are two of them, one for x and one for y (each is called a “partial” derivative).
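To make the two-input case concrete, here is a sketch using a made-up function, f(x, y) = x² + y². This function does not appear elsewhere in the article; it is an assumption chosen because its partial derivatives are easy to write by hand (2x for x, 2y for y). The starting point, learning rate, and step count are also arbitrary.

```swift
// Hypothetical two-input function, chosen only for illustration: x² + y².
func f(_ x: Double, _ y: Double) -> Double { x * x + y * y }

// Its two partial derivatives, written by hand:
// 2x with respect to x, and 2y with respect to y.
func gradient(_ x: Double, _ y: Double) -> (dx: Double, dy: Double) {
    return (dx: 2 * x, dy: 2 * y)
}

// Arbitrary starting point and settings (assumptions).
var x = 3.0
var y = -4.0
let learningRate = 0.1

for _ in 0..<50 {
    let g = gradient(x, y)
    // Each input takes its own downhill step, using its own partial derivative.
    x -= learningRate * g.dx
    y -= learningRate * g.dy
}

print("x = \(x), y = \(y), f(x, y) = \(f(x, y))")
```

The loop has the same shape as the one-input version. The only change is that there is one partial derivative, and one update, per input.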

In Part Two, we’ll get an introduction to Differentiable Swift, which can give you derivatives of normal functions you write (for free!).