15 Years of the iPhone App Store: The Story of Molecules

The iPhone App Store launched 15 years ago, and transformed the way that we make, use, and obtain software. More personally, it had a tremendous positive impact on my life. I had one of the first 500 applications that launched with the store that day, an open source 3D molecular modeler called Molecules.

The reception of the Molecules app went far beyond my wildest dreams. I made many friends, met my amazing wife, and took my career in a completely different direction, all as a result of this one little project.

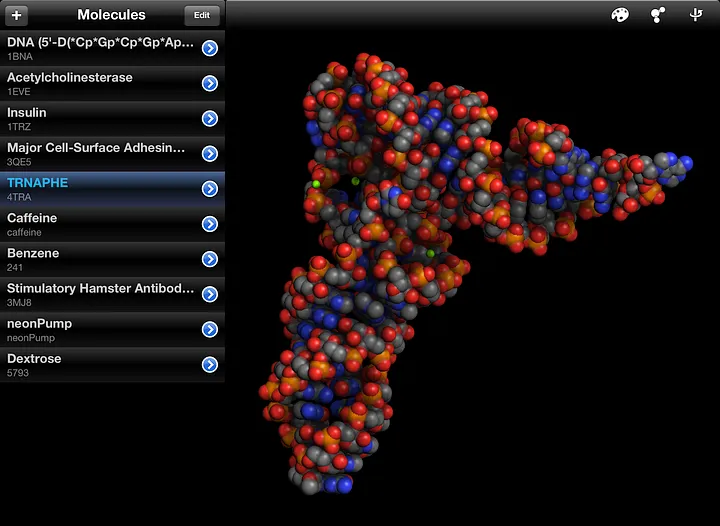

Molecules was an application for iPhone (and later iPad and Mac) that let you render and manipulate full 3D models of molecular structures right on your mobile device. While we take advanced 3D graphics on our phones for granted nowadays, at the time this had never been done before. Molecules also let you search for and download biomolecules from the RCSB Protein Data Bank, as well as small molecules from the NIH’s PubChem.

The interface for Molecules on iPad, showing a 3D rendering of a transfer RNA molecule.

It all came about due to a request by my brother. At the time he was a researcher working toward his Ph.D. in protein crystallography, and had just come back from a poster session. I heard him complaining about how you couldn’t really show off a new 3D protein structure on a flat printed poster, and he couldn’t hold a laptop in his hands through the entire poster session. There had to be a better way to do this.

I’ve been excited about handheld computing since the U.S. Robotics Pilot, and as soon as I bought the first iPhone, I jailbroke it to begin developing with the private Cocoa Touch interface on the device. I was floored by the announcement of the beta iPhone SDK, especially the previously unknown OpenGL ES capabilities of the device. At Apple’s Worldwide Developers Conference in 2008, while watching a presentation on how OpenGL ES worked on the iPhone, I started thinking more about my brother’s problem and wondered if I could actually pull off a molecular renderer on this little handheld computer.

I came back from the conference and immediately dove in, devoting every night and weekend to learning OpenGL ES and building prototypes. I started believing this could actually work, and rushed to get an application ready before the App Store submission deadline. It was both exhilarating and nightmarishly stressful to be one of those first developers working with the beta iPhone SDK before launch. As unbelievably broken as some things were back then, all of us early developers got our apps built, signed, and submitted through sheer force of will.

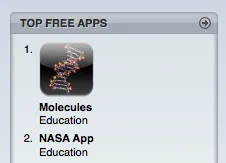

It’s hard to describe the pride I felt at seeing my little app’s name and icon on the App Store when it went live. I had made something that anyone in the world could download and use. And they did, in numbers I couldn’t have imagined. Molecules was featured on the front page of the App Store multiple times, downloaded over 3.5 million times before I stopped getting statistics (we have much better information in the modern App Store), and even hit #1 in the Education category for a little while:

Molecules made it (briefly) to the top of the Free Apps in the Education category on iTunes.

I still smile when I see the icon for it in the original Apple ads for the iPhone 3G:

After a little while, Apple announced another new handheld device: the iPad. That larger canvas seemed like the perfect place for a molecular modeler, so I once again worked hard to adapt my iPhone application to this new platform. It was there at the launch of the iPad App Store, and even became one of the standard apps loaded on demo iPads at the Apple retail stores. I can’t tell you how honored I was to see it on the late Steve Jobs’ iPad on stage:

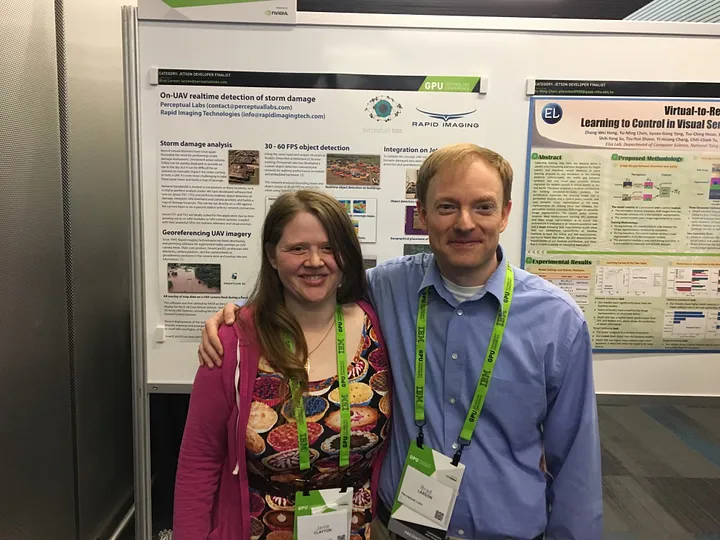

At one point, I was approached by the experienced educators behind the company Digital World Biology who wanted to try for a grant to expand Molecules into a real teaching tool for classrooms across the world. The grant was funded by the NSF, and they brought on a brilliant local iPhone developer named Janie to work with me on the project. She and I got along terrifically, and after the grant was done, she joined my company to work on robotic control software. We eventually realized that we brought out the best in each other, and Janie and I will have been married for five years this September.

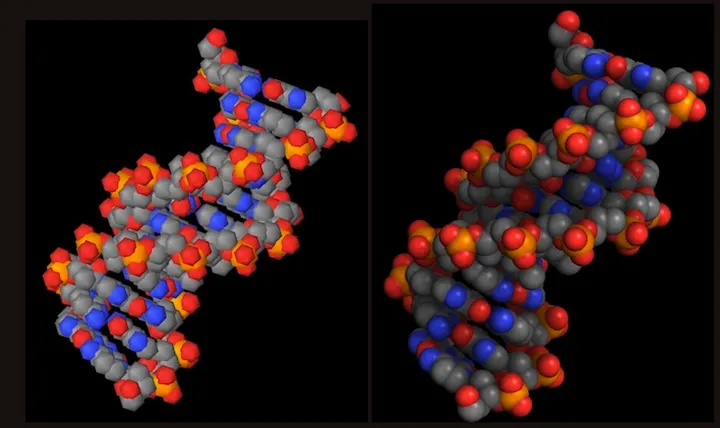

The iPad also had new hardware rendering capabilities, going beyond the fixed-function pipeline of OpenGL ES 1.0 to the programmable shaders of OpenGL ES 2.0. This opened up whole new rendering techniques, and I became determined to adapt a recently published technique by Tarini and coworkers to mobile devices. That was an incredibly fun and educational journey, culminating in a conference paper and a nice mention onstage in WWDC 2011’s session “What’s New in OpenGL ES”.

The old OpenGL ES 1.0 renderer (left) and the extremely realistic new OpenGL ES 2.0 renderer (right).

I soon went deep down the rabbit hole of GPU-accelerated image processing, which first took me to machine vision and then eventually to machine learning. The combination of Swift and machine learning lured me to the incredible Swift for TensorFlow team at Google, and then here to PassiveLogic.

Unfortunately, I got so busy with all the new things I was working on that I had less and less time to maintain Molecules over the years, and it eventually fell into disrepair. That has eaten at me, so I recently started rewriting it from the original Objective-C, UIKit, and OpenGL ES to modern Swift, SwiftUI, and Metal. Going through that process is showing me both how far technology has advanced in the last 15 years and how much I’ve grown as a developer. I’m having a lot of fun, and hope to have it back on the App Store soon.

My pug Bucky is helping me get my Metal rendering correct in my SwiftUI interface.

I truly am grateful for the opportunity I had to be there at the start of something special 15 years ago. I have a chance to work with outstanding people on fascinating technology every day at PassiveLogic in large part because of what came out of my little open source project. To say nothing of the wonderful wife I might never have met without it.

Even though my focus at PassiveLogic is on our deep technology stack, primarily how we can advance the Swift programming language to support generative autonomy, I also love working with our top-notch iOS development team. We’ll have something really exciting to show off soon that combines 3D rendering with the latest in spatial computing.

It’s thrilling to be there at the launch of a new platform, and I’m hoping that the one we’re building here will give my younger coworkers the same sense of pride and awe that I had 15 years ago.